Architecture

This article describes the recommended infrastructure architecture for running CKEditor AI On-Premises.

The following setup is recommended but not obligatory. CKEditor AI On-Premises can run as a single instance on one server, but it also supports multi-instance deployments behind a load balancer.

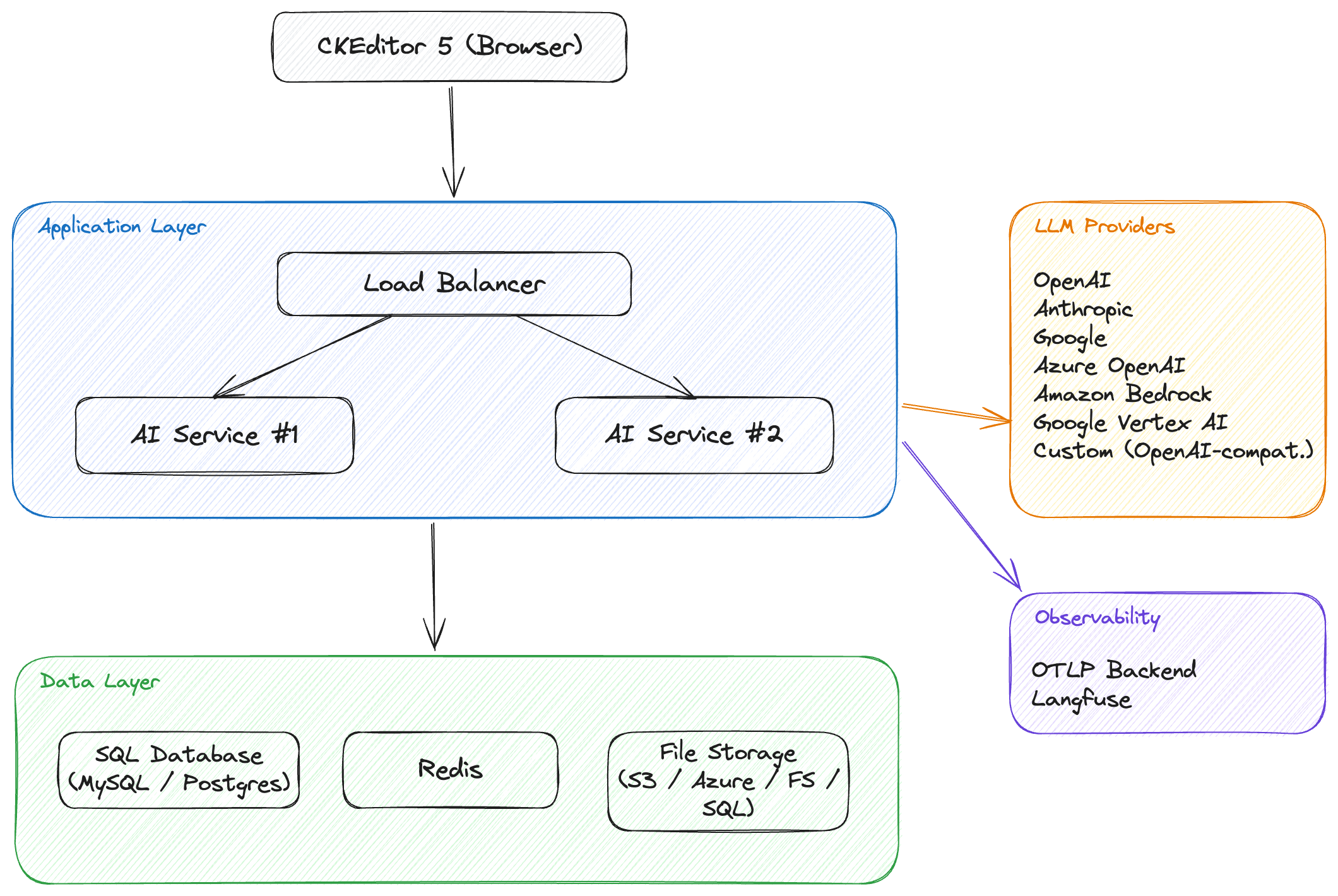

The CKEditor AI On-Premises infrastructure consists of two layers. The application layer runs the AI service provided as a Docker image. The data layer hosts the SQL database, Redis, and file storage. The application layer communicates with LLM providers (such as OpenAI, Anthropic, or Google) to process AI requests.

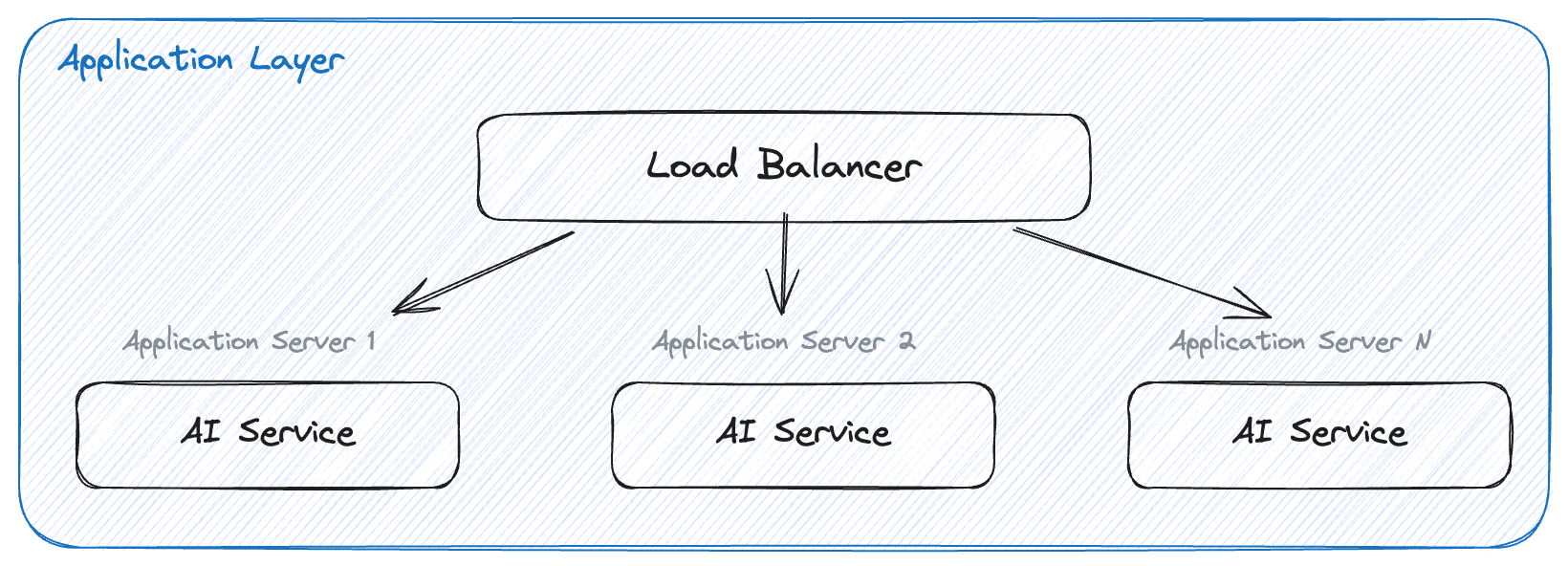

The application layer consists of one or more application server instances and, optionally, a load balancer.

Each application server runs the CKEditor AI On-Premises Docker image. You can use any Open Container Initiative (OCI) compatible runtime or container platform, such as Docker, Kubernetes, Amazon Elastic Container Service, or Azure Container Instances.

When running multiple instances, a load balancer distributes incoming requests across them. You can use NGINX, HAProxy, Amazon Elastic Load Balancing, Azure Load Balancer, or any other solution of your choice. Running multiple instances behind a load balancer improves both availability and performance.

Recommendations:

- Run at least 3 instances of CKEditor AI On-Premises for high availability.

- Configure the load balancer to use the round-robin algorithm.

- Refer to the SSL communication guide for setting up TLS via the load balancer.
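To illustrate what the round-robin recommendation means in practice, here is a minimal Python sketch of the selection logic. It is not how any particular load balancer is implemented, and the instance addresses and port are hypothetical placeholders.

```python
from itertools import cycle
from typing import Iterator, List


def round_robin(instances: List[str]) -> Iterator[str]:
    """Return an iterator that hands out instances in a repeating, fixed order."""
    if not instances:
        raise ValueError("at least one instance is required")
    return cycle(instances)


# Hypothetical addresses of three application instances (assumption).
INSTANCES = ["10.0.1.10:8000", "10.0.1.11:8000", "10.0.1.12:8000"]

if __name__ == "__main__":
    picker = round_robin(INSTANCES)
    # Each incoming request goes to the next instance in the rotation.
    for request_id in range(6):
        print(f"request {request_id} -> {next(picker)}")
```

With three instances, every instance receives every third request, so the load is spread evenly as long as requests have a similar cost.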

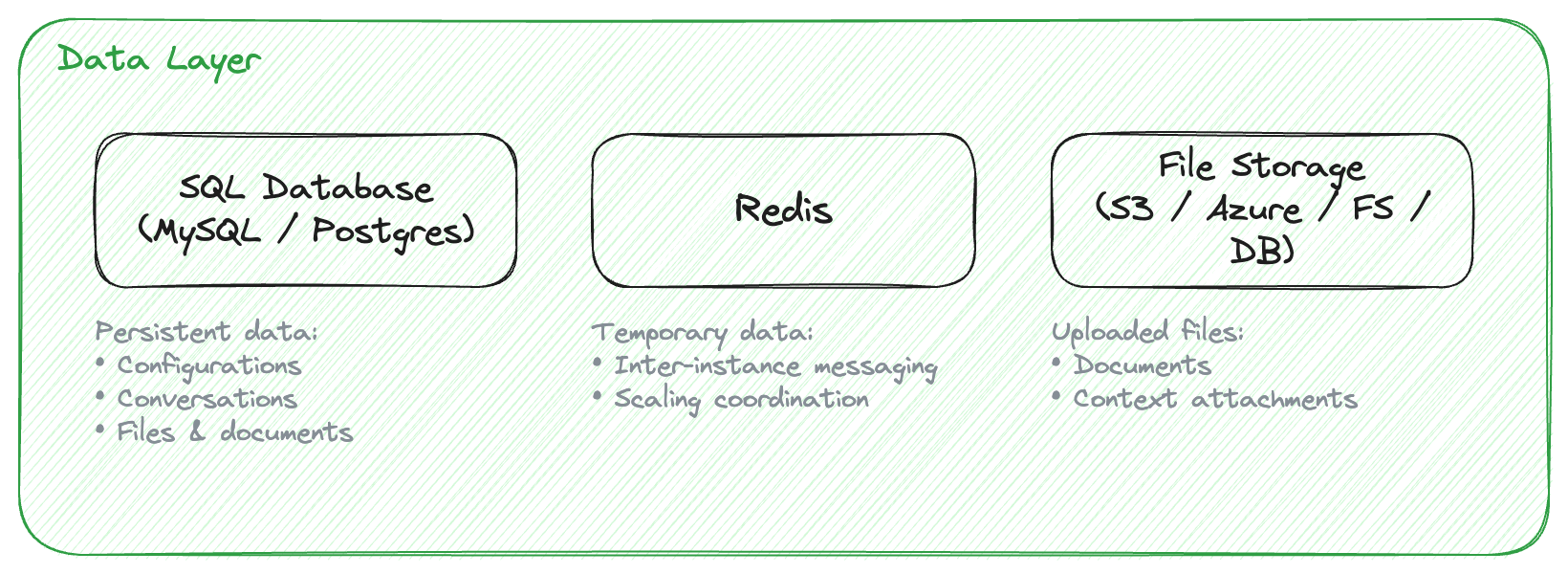

The data layer consists of an SQL database, a Redis instance, and file storage.

The SQL database (MySQL 8+ or PostgreSQL 12+) stores persistent data: configurations, conversations, files, and documents. Create the database before starting the application. Refer to the Deployment guide for database creation scripts.

Redis (version 3.2.6 or newer) handles temporary data and inter-instance communication for scaling. When multiple application instances are running, Redis ensures that data is shared correctly across all of them.

File storage holds uploaded files and documents. Supported backends are Amazon S3, Azure Blob Storage, the local filesystem, or the SQL database itself. See the Configuration guide for details.
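The version minimums listed above for the SQL database and Redis can be verified before deployment. The following Python sketch compares a version string reported by a server against those minimums. The helper names are hypothetical, and how you obtain the version string (for example, from `SELECT VERSION()` or the Redis `INFO` command) is left to your tooling.

```python
import re
from typing import Dict, Tuple

# Minimum versions taken from this article.
MINIMUM_VERSIONS: Dict[str, Tuple[int, ...]] = {
    "mysql": (8,),
    "postgresql": (12,),
    "redis": (3, 2, 6),
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Extract the leading dotted numeric version, ignoring any suffix."""
    match = re.match(r"\d+(?:\.\d+)*", text.strip())
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.group().split("."))


def meets_minimum(component: str, reported: str) -> bool:
    """Return True if the reported version is at least the required minimum."""
    minimum = MINIMUM_VERSIONS[component]
    version = parse_version(reported)
    # Pad both tuples with zeros so that "8" and "8.0.0" compare as equal.
    width = max(len(minimum), len(version))
    padded_version = version + (0,) * (width - len(version))
    padded_minimum = minimum + (0,) * (width - len(minimum))
    return padded_version >= padded_minimum


if __name__ == "__main__":
    # Example version strings, not output captured from real servers.
    for component, reported in [("mysql", "8.0.36"), ("redis", "3.2.5")]:
        status = "ok" if meets_minimum(component, reported) else "too old"
        print(f"{component} {reported}: {status}")
```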

Recommendations:

- Databases should not be publicly accessible. Run them in a separate subnet with access restricted to the application layer.

- Prepare and test a backup mechanism for the SQL database.

- Redis Cluster is supported. Redis Sentinel is not. See Connecting to Redis Cluster.

CKEditor AI On-Premises connects to external LLM providers to process AI requests. At least one provider must be configured. Supported providers:

- OpenAI – GPT family models.

- Anthropic – Claude family models.

- Google – Gemini family models.

- Azure OpenAI – OpenAI models hosted on Azure (via the OpenAI-compatible interface).

- Amazon Bedrock – Models available through AWS Bedrock.

- Google Vertex AI – Models available through Google Cloud Vertex AI.

- Custom providers – Any other OpenAI API-compatible endpoint.

The application sends requests to provider APIs over HTTPS. Make sure that outbound HTTPS traffic from the application servers to the provider endpoints is allowed by your firewall rules.
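One way to confirm that the firewall allows this traffic is to attempt a TLS handshake from an application server. The Python sketch below does that using only the standard library. The endpoint list is an example based on the public API hosts of three providers and is an assumption; replace it with the endpoints of the providers you actually configure.

```python
import socket
import ssl
from typing import Tuple
from urllib.parse import urlsplit

# Example endpoints (assumption): replace with your configured providers.
PROVIDER_ENDPOINTS = [
    "https://api.openai.com",
    "https://api.anthropic.com",
    "https://generativelanguage.googleapis.com",
]


def endpoint_address(url: str) -> Tuple[str, int]:
    """Return the (host, port) pair for an https:// URL, defaulting to 443."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        raise ValueError(f"expected an https:// URL, got {url!r}")
    return parts.hostname, parts.port or 443


def can_connect(url: str, timeout: float = 5.0) -> bool:
    """Try a TCP connection plus TLS handshake; return False on any failure."""
    host, port = endpoint_address(url)
    context = ssl.create_default_context()
    try:
        with socket.create_connection((host, port), timeout=timeout) as raw:
            with context.wrap_socket(raw, server_hostname=host):
                return True
    except OSError:
        # Covers DNS failures, refused or timed-out connections, and TLS errors.
        return False


if __name__ == "__main__":
    for url in PROVIDER_ENDPOINTS:
        state = "reachable" if can_connect(url) else "BLOCKED or unreachable"
        print(f"{url}: {state}")
```

A successful handshake only shows that the network path is open. It does not validate API keys or provider configuration.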

For provider configuration details, see LLM Providers.

CKEditor AI On-Premises supports OpenTelemetry instrumentation for monitoring AI interactions, token usage, and service health. Trace data can be exported to any OTLP-compatible backend (such as Jaeger, Grafana Tempo, or Datadog) and, optionally, to Langfuse for AI-specific insights. Both exports can run simultaneously.

When enabled, the application layer makes outbound HTTPS connections to the configured telemetry backends. Make sure the corresponding endpoints are reachable from your application servers.

For configuration details, see the Observability guide.

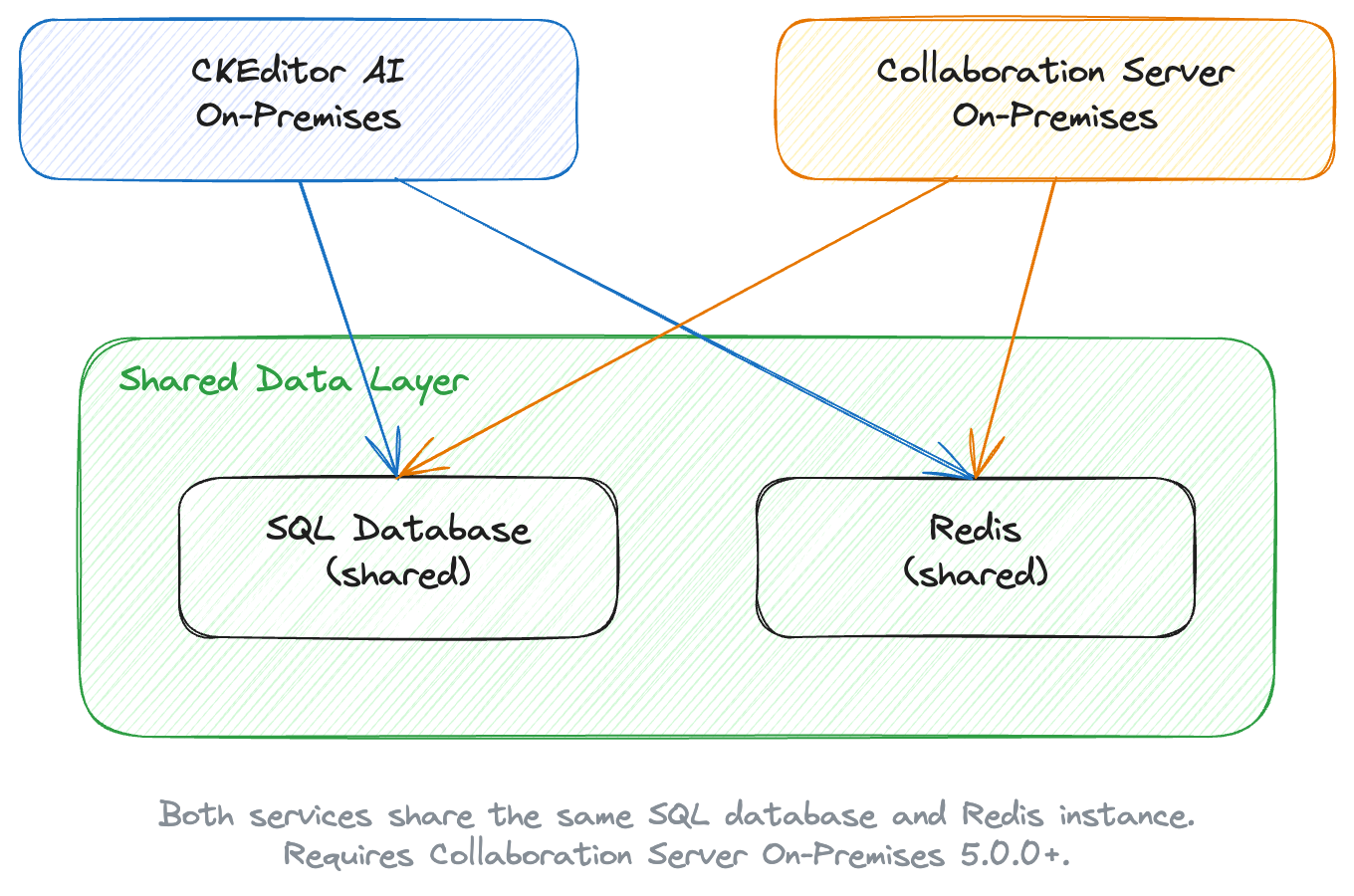

CKEditor AI On-Premises can share its data layer with Collaboration Server On-Premises. When integrated, both services use the same SQL database and Redis instance, so separate databases are not required. Environments and access keys created in the Collaboration Server Management Panel are automatically available to CKEditor AI On-Premises.

For step-by-step integration instructions, see Integration with Collaboration Server On-Premises.

- Deployment – Install and run CKEditor AI On-Premises.

- Requirements – Check hardware and software requirements.

- Configuration – Configure the application.

- Observability – Monitor AI interactions and service health.